Today, OpenAI officially launched two new models, o3 and o4-mini, the latest in its “o” series of models trained to think longer before answering. OpenAI describes them as the smartest models it has released to date, enabling ChatGPT to handle complex tasks with deep reasoning and proactive tool use.

For the first time, these reasoning models can use and combine every tool in ChatGPT: web search, analyzing uploaded files and other data with Python, reasoning about image inputs, and generating images. They are trained to decide on their own when and how to use tools, and they typically respond in under a minute in the appropriate output format.

🚀 Key New Features

o3

- OpenAI's most powerful reasoning model to date

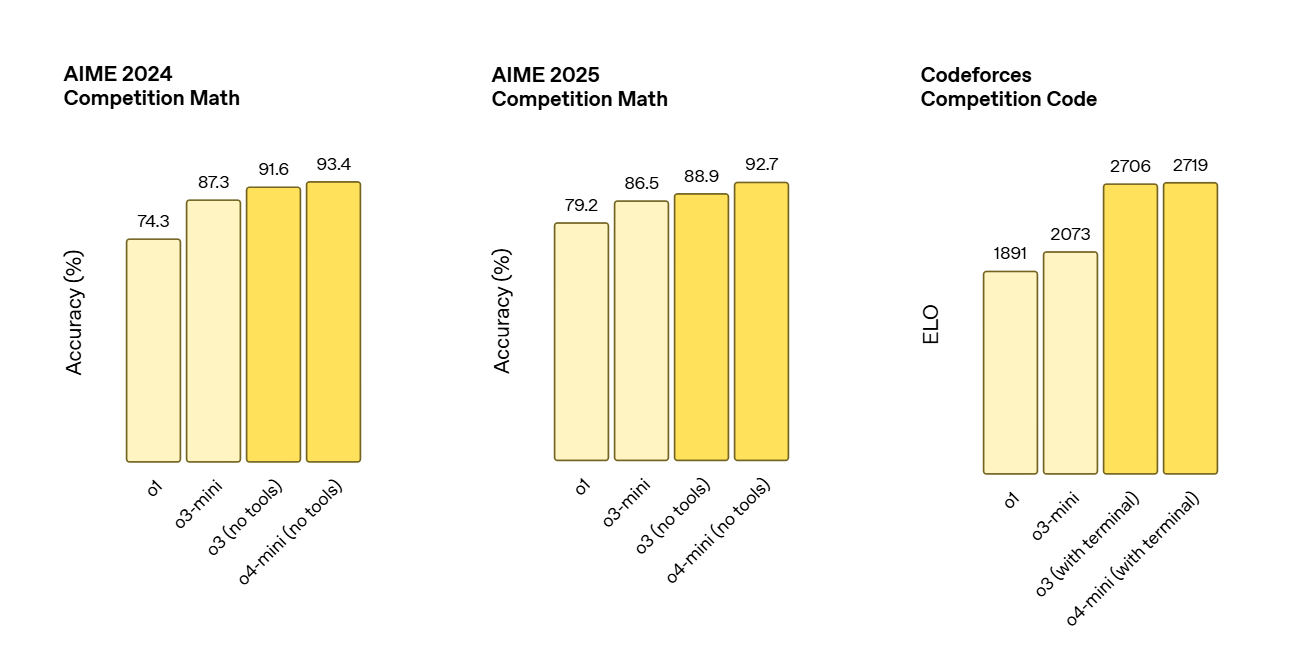

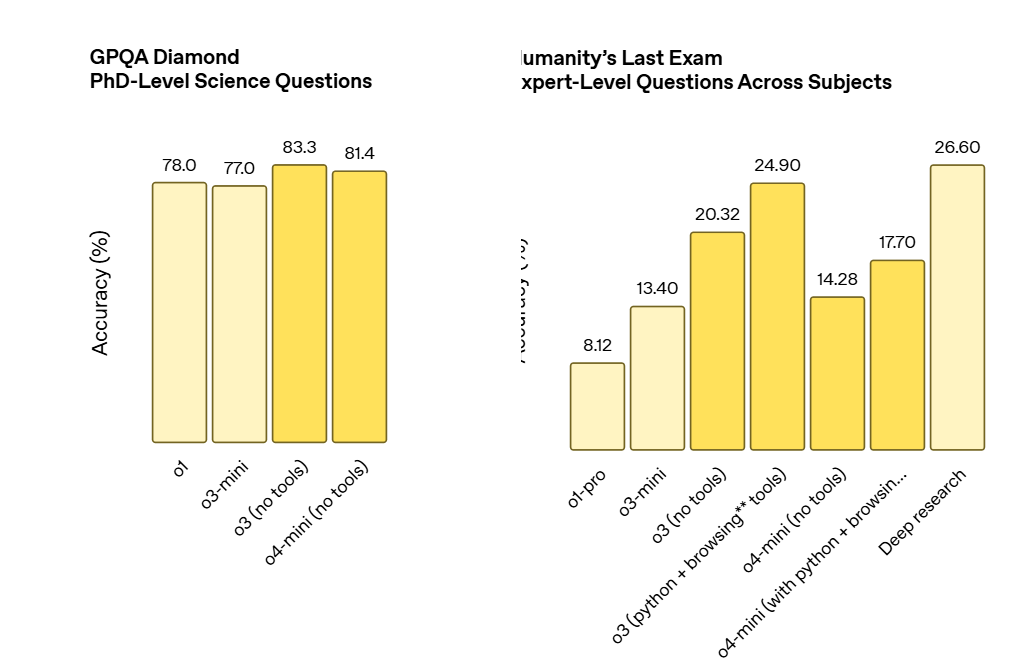

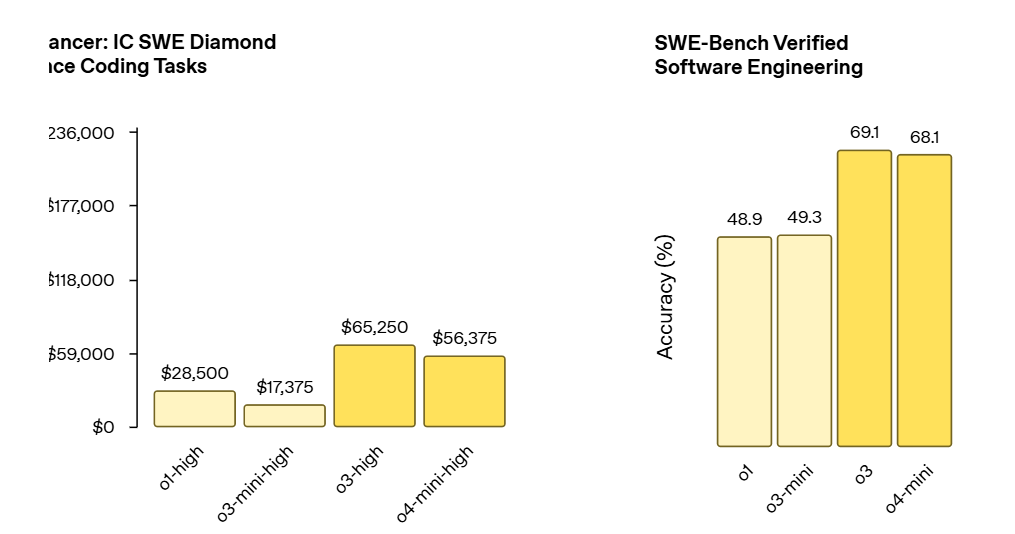

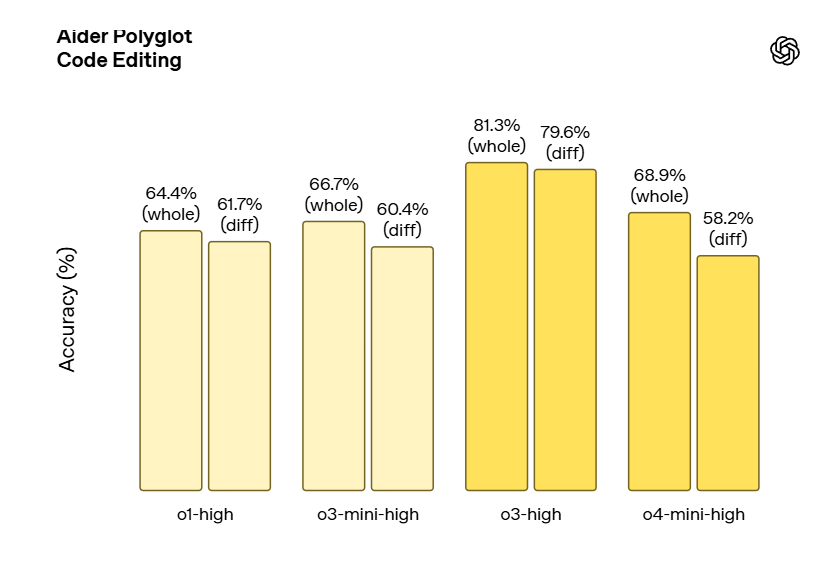

- Sets a new state of the art on benchmarks including Codeforces, SWE-bench, and MMMU

- Extremely strong at analyzing images, charts, and graphs

- Makes 20% fewer major errors than o1 on difficult real-world tasks, according to evaluations by external experts

- Highly rated in fields such as programming, creative thinking, biology, mathematics, and engineering

o4-mini

- Compact model, optimized for speed and cost

- Best-performing benchmarked model on AIME 2024 and 2025; scores 99.5% pass@1 on AIME 2025 when given access to a Python interpreter

- Outperforms its predecessor, o3-mini, on non-STEM tasks as well as in domains such as data science

- Supports significantly higher usage limits than o3, making it a good fit for high-volume, high-throughput workloads
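
For readers unfamiliar with the pass@1 metric quoted above, here is a minimal sketch of how pass@k is commonly estimated from repeated samples, using the unbiased estimator popularized by code-generation benchmarks. The announcement does not say exactly how OpenAI computed its figure, so the function names and the per-problem sample counts below are illustrative assumptions, not OpenAI's evaluation code.

```python
from math import comb


def pass_at_k(n: int, c: int, k: int) -> float:
    """Estimate the chance that at least one of k samples is correct,
    given n total samples of which c were correct."""
    if not 0 <= c <= n or not 1 <= k <= n:
        raise ValueError("need 0 <= c <= n and 1 <= k <= n")
    if n - c < k:
        # Fewer than k incorrect samples: every draw of k contains a correct one.
        return 1.0
    return 1.0 - comb(n - c, k) / comb(n, k)


def benchmark_pass_at_1(results: list[tuple[int, int]]) -> float:
    """Average pass@1 over problems; each item is (samples, correct samples)."""
    return sum(pass_at_k(n, c, 1) for n, c in results) / len(results)


# Hypothetical data: 4 problems, 8 samples each (not real AIME results).
results = [(8, 8), (8, 8), (8, 7), (8, 8)]
print(f"pass@1 = {benchmark_pass_at_1(results):.3f}")
```

For k = 1 the estimator reduces to the fraction of correct samples per problem, averaged over all problems.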

🧠 Visual Reasoning

- Can directly integrate images into reasoning chains

- Interprets whiteboard photos, textbook diagrams, and hand-drawn sketches, even when the image is blurry, reversed, or low quality

- Can rotate, zoom, and otherwise transform images as part of its reasoning

- Achieves best-in-class accuracy on multimodal benchmarks

🔧 Using tools like a true agent

Example: the question “How does electricity consumption in California this summer compare to last year?”

→ o3 can:

- Search for public utility data

- Write Python code to generate forecasts

- Create charts, analyze trends

- Chain multiple tool calls and proactively search for additional data when needed
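
To make the analysis step concrete, here is a rough sketch of the kind of Python a model might write once it has found the data: a year-over-year comparison plus a simple least-squares trend forecast. The consumption figures are made-up placeholders, not real California utility data, and the function names are hypothetical. The actual code o3 generates will differ.

```python
# Hypothetical summer (June to August) consumption in TWh; NOT real utility data.
summer_twh = {2021: 70.0, 2022: 72.0, 2023: 71.0, 2024: 74.0}


def pct_change(old: float, new: float) -> float:
    """Percentage change from old to new."""
    return (new - old) / old * 100.0


def linear_forecast(series: dict[int, float], year: int) -> float:
    """Fit an ordinary least-squares line through (year, value) points
    and evaluate it at the requested year."""
    xs, ys = list(series.keys()), list(series.values())
    n = len(xs)
    mean_x, mean_y = sum(xs) / n, sum(ys) / n
    slope = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys)) / sum(
        (x - mean_x) ** 2 for x in xs
    )
    return mean_y + slope * (year - mean_x)


forecast_2025 = linear_forecast(summer_twh, 2025)
change = pct_change(summer_twh[2024], forecast_2025)
print(f"Forecast for summer 2025: {forecast_2025:.1f} TWh ({change:+.1f}% vs. 2024)")
```

A real run would replace the placeholder dictionary with the data returned by the search step, and would likely pass the result to a plotting library to produce the chart.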

⚙️ Optimizing efficiency and cost

- o3 is smarter and more cost-effective than o1

- o4-mini is significantly more efficient than o3-mini

- For most real-world usage, OpenAI expects o3 and o4-mini to be both smarter and cheaper than o1 and o3-mini, respectively

🔒 Safety and control

- Completely rebuilt safety training data

- Improved refusal of requests in high-risk areas (biological threats, malware generation, jailbreaks)

- A reasoning LLM monitor flagged ~99% of conversations in a human red-teaming campaign

- Evaluated for AI self-improvement, cybersecurity, and biological and chemical risks; both models remain below the “High” risk threshold in every category

💻 Codex CLI – Direct reasoning from the command line

- Lightweight coding agent that can run directly in the terminal

- Accepts screenshots and low-fidelity sketches as input, so the model can reason over them together with your local code

- Open source on GitHub

- $1 million USD initiative to support projects using Codex CLI, with grants awarded as API credits in increments of $25,000

🔓 Access and distribution

- ChatGPT Plus, Pro, and Team users can choose o3, o4-mini, o4-mini-high

- Enterprise and Edu users will get access one week later

- Free users can try o4-mini by selecting “Think” in the composer before submitting a query

- Both models are available to developers through the Chat Completions API and the Responses API

- The Responses API will soon support built-in tools within the model's reasoning: web search, file search, and code interpreter
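
As a rough illustration of the API access listed above, the sketch below sends a prompt through the Responses API with the official `openai` Python package. It assumes the package is installed, that `OPENAI_API_KEY` is set in the environment, and that the API model identifiers are `o3` and `o4-mini`. The helper names `build_request` and `ask` are hypothetical, and the `reasoning` effort setting is optional.

```python
def build_request(model: str, prompt: str, effort: str = "medium") -> dict:
    """Assemble keyword arguments for a Responses API call (hypothetical helper)."""
    if effort not in {"low", "medium", "high"}:
        raise ValueError("effort must be 'low', 'medium', or 'high'")
    return {"model": model, "input": prompt, "reasoning": {"effort": effort}}


def ask(model: str, prompt: str, effort: str = "medium") -> str:
    """Send the prompt and return the model's text output."""
    # Imported lazily so build_request can be used without the SDK installed.
    from openai import OpenAI

    client = OpenAI()  # reads OPENAI_API_KEY from the environment
    response = client.responses.create(**build_request(model, prompt, effort))
    return response.output_text


request = build_request("o4-mini", "Summarize the trade-offs between o3 and o4-mini.")
print(request)
```

Calling `ask("o4-mini", "...")` performs the actual request. The same models can also be reached through `client.chat.completions.create(model=..., messages=[...])` for code that already uses Chat Completions.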

Source: https://openai.com/index/introducing-o3-and-o4-mini/